# Virtual Production #

MagicLoom Studio pioneers the next frontier in filmmaking through cutting-edge virtual production processes. Leveraging advancements in computing power, VFX expertise, and game-engine technology, we empower filmmakers to create immersive environments that seamlessly interact with live-action, enabling real-time decision-making and dynamic scene changes.

## Key Advantages of Virtual Production ##

1. **Real-time Interaction**: Environments interact with live-action, allowing filmmakers to make immediate decisions and changes.

2. **Dynamic Backgrounds**: Backgrounds shift in correct perspective with the camera’s position, updating in real time as the camera moves.

3. **Seamless Transitions**: Production can switch between convincingly detailed, photo-real environments without leaving the stage.

4. **In-Camera Final Shots**: Final shots can be captured in-camera, eliminating the need to replace backgrounds in post-production.

5. **Global Location Access**: Virtual production brings locations from around the world to the stage with compelling photo-realism.
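The perspective shift described in point 2 can be illustrated with a little geometry. The sketch below is a minimal illustration, not a description of any particular studio's or engine's pipeline. It assumes a flat LED wall on the plane z = 0, a tracked camera in front of it (z < 0), and virtual scenery behind it (z > 0). The function name `wall_position` and the coordinate convention are hypothetical. For each virtual point, it finds where the line from the camera to that point crosses the wall, which is where the point has to be drawn.

```python
from typing import Tuple

Vec3 = Tuple[float, float, float]


def wall_position(camera: Vec3, point: Vec3) -> Tuple[float, float]:
    """Return the (x, y) spot on the wall plane z = 0 where a virtual point
    must be drawn so that it lines up correctly for the given camera.

    Assumed convention: the camera sits in front of the wall (z < 0) and
    the virtual point sits on or behind it (z >= 0).
    """
    cx, cy, cz = camera
    px, py, pz = point
    if cz >= 0 or pz < 0:
        raise ValueError("camera must have z < 0 and the virtual point z >= 0")
    # Fraction of the way from the camera to the point at which z reaches 0.
    t = -cz / (pz - cz)
    return (cx + t * (px - cx), cy + t * (py - cy))


if __name__ == "__main__":
    near_tree = (0.0, 0.0, 1.0)       # 1 unit behind the wall
    far_mountain = (0.0, 0.0, 100.0)  # 100 units behind the wall
    for cam_x in (0.0, 1.0):          # slide the camera sideways by 1 unit
        camera = (cam_x, 0.0, -4.0)
        print(cam_x, wall_position(camera, near_tree), wall_position(camera, far_mountain))
```

When the camera slides sideways, the distant mountain has to move across the wall almost as far as the camera did, while the nearby tree barely moves. That difference is the parallax that makes the flat wall read as a deep environment through the lens.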

## Defining Virtual Production ##

At its core, virtual production is a modern, agile content creation process that starts VFX development early, embracing technology throughout the entire production life cycle. It fosters flexibility, robustness, time efficiency, cost-effectiveness, and intelligence at each stage of filmmaking.

## Contrasting Traditional and Virtual Production ##

– Traditional production is linear, often leading to a “fix-it-in-post” mentality and expensive reshoots.

– Virtual production is iterative and creative, integrating VFX work in pre-production to refine the final look continuously.

## Virtual Production Pipeline Benefits ##

– **Elimination of Green Screen Keying**: Because the LED wall casts correct reflections and contact lighting on set, post-production teams save the time otherwise spent keying green screens and correcting those effects by hand.

– **Directorial Control of Lighting Environment**: Directors have full control over the lighting environment, adjusting conditions and shadow angles in real time.

– **Enhanced Actor Experience**: Actors interact more naturally with CG elements, aided by realistic visualization rather than relying solely on green screens.

– **Remote Location Scouting**: Location scouting can be done remotely and any virtual world can be shot in-house, which minimizes travel costs.

– **Seamless Set Extensions**: Large-scale set extensions match seamlessly with the LED backdrop in post.

## Visualization ##

Using 3D VFX assets to visualize and plan a task. There are many types of visualization (for example, pitchvis, techvis, and postvis), but the preeminent form is previs: planning a scene in 3D before shooting.

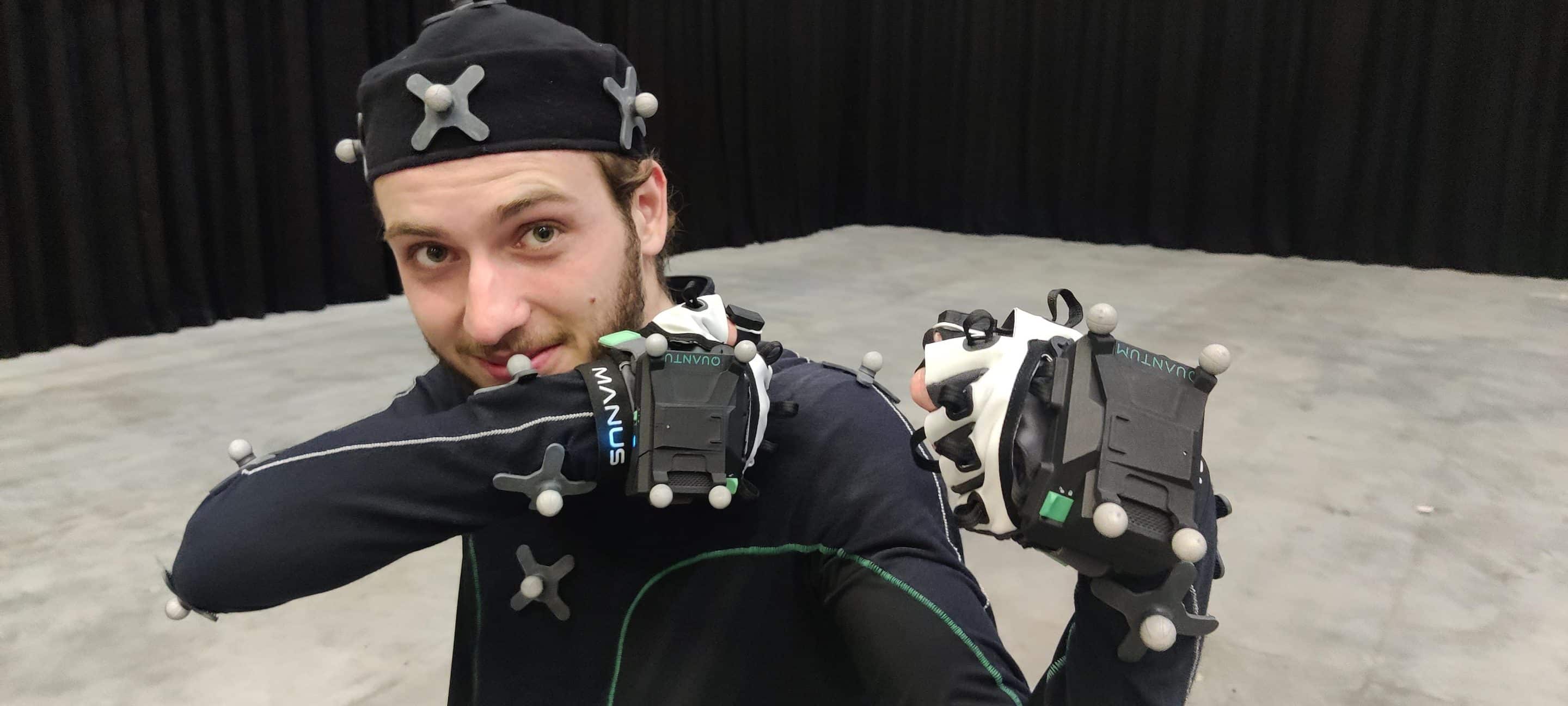

## Motion Capture ##

Capturing performers’ movements to drive animation. Mocap systems have been used in film since the 1980s, but over time hardware form factors have shrunk and software has become increasingly automated, which has broadened and deepened their use. Excellent motion capture is still technically and artistically challenging, and it remains a critical tool for creating realistic animations of digital humans and creatures.

## Hybrid Camera (Simulcam, Green Screen Hybrid) ##

Compositing digital VFX with live-action camera footage in real time. Simulcam was originally developed, and the term coined, by Weta Digital and James Cameron for the movie Avatar (2009). It is a direct improvement over shooting against a green screen, because seeing the digital and the physical simultaneously with accurate parallax gives directors a better spatial understanding of the scene. Actors also benefit from seeing a preliminary view of the visual effects instead of acting against a green wall.
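At the level of a single pixel, compositing a CG element over live-action footage is commonly done with the standard "over" blend. The sketch below is only an illustration of that blend under stated assumptions: colors are floats in the range 0 to 1, alpha is straight (not premultiplied), and the function name `composite_over` is hypothetical. It is not a description of how Simulcam itself is implemented.

```python
from typing import Tuple

RGB = Tuple[float, float, float]


def composite_over(cg: RGB, cg_alpha: float, plate: RGB) -> RGB:
    """Blend one CG pixel over one live-action plate pixel.

    cg_alpha is the CG pixel's coverage: 1.0 fully hides the plate,
    0.0 leaves the plate untouched. Straight (non-premultiplied) alpha
    is assumed.
    """
    if not 0.0 <= cg_alpha <= 1.0:
        raise ValueError("cg_alpha must be between 0.0 and 1.0")
    blended = tuple(c * cg_alpha + p * (1.0 - cg_alpha) for c, p in zip(cg, plate))
    return (blended[0], blended[1], blended[2])


if __name__ == "__main__":
    creature_red = (1.0, 0.0, 0.0)   # a CG pixel
    stage_blue = (0.0, 0.0, 1.0)     # the camera's live-action pixel
    for alpha in (0.0, 0.5, 1.0):
        print(alpha, composite_over(creature_red, alpha, stage_blue))
```

A real-time system applies this blend to every pixel of every frame, after rendering the CG from the tracked camera's point of view so that the two images line up.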

## LED Live-Action ##

Shooting against LED panels displaying final-quality VFX, replacing traditional green screens. LED is a natural progression from the technique of 2D video screen projection, and its ability to cast light for accurate reflections is a significant benefit to post-production.

## Use Cases and Creative Empowerment ##

– **Improving Storytelling**: Visualization spans the entire production, ensuring alignment with the director’s vision.

– **Resolving Ambiguity**: Hybrid camera and LED volumes allow real-time interaction with visual effects, reducing post-production uncertainties.

– **Unlocking Possibilities**: Photogrammetry and 3D scanning enable virtualization of real-world sets, offering creative freedom.

## Business Outcomes of Virtual Production ##

– **Cost Savings**: Reducing reshoots, travel costs, and post-production VFX expenses.

– **Efficiency Boost**: Accelerating time to market by integrating VFX early in the production process.

– **Asset Reusability**: LED volumes and virtual sets can be reused for sequels, subsequent seasons, and other media, minimizing costs further downstream.

## Conclusion ##

MagicLoom Studio is at the forefront of transforming the filmmaking landscape through virtual production. By embracing technological advancements and creative ingenuity, we empower filmmakers to bring their visions to life with unprecedented flexibility, efficiency, and realism. Join us in reshaping the future of filmmaking through the magic of virtual production.

Virtual production may also have cost benefits further downstream from principal photography. Marketing teams can use LED volumes and virtual sets to shoot commercials, and VFX assets can be reused for sequels, subsequent seasons, and other media.

While reusing digital assets is not impossible today, it is not the norm. Most organizations have many disparate digital versions of the same 3D asset because each asset is tied to an individual show and, even within a given show, production and marketing budgets are siloed. Closing this gap will take shared asset catalogues, collaboration between VP studios, and collective pipelines, which will slowly become the new real-time production standard for in-camera VFX.
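One way to picture a shared asset catalogue is as an index keyed by a fingerprint of each asset's contents rather than by the show that produced it. The sketch below is a minimal illustration of that idea, assuming assets are available as raw bytes. The `AssetCatalog` class and its method names are hypothetical. Content hashing only catches byte-identical copies, so versions of an asset that have diverged between shows would need a richer matching scheme than this one.

```python
import hashlib
from collections import defaultdict
from typing import Dict, Set


class AssetCatalog:
    """Hypothetical catalogue that indexes assets by content hash, not by show."""

    def __init__(self) -> None:
        # Maps a SHA-256 digest of the asset bytes to the shows that use it.
        self._shows_by_digest: Dict[str, Set[str]] = defaultdict(set)

    def register(self, show: str, asset_bytes: bytes) -> str:
        """Record that a show uses this asset and return the asset's digest."""
        digest = hashlib.sha256(asset_bytes).hexdigest()
        self._shows_by_digest[digest].add(show)
        return digest

    def shared_assets(self) -> Dict[str, Set[str]]:
        """Return only the assets registered by more than one show."""
        return {d: set(s) for d, s in self._shows_by_digest.items() if len(s) > 1}


if __name__ == "__main__":
    catalog = AssetCatalog()
    catalog.register("feature_film", b"castle mesh data")
    catalog.register("marketing_spot", b"castle mesh data")  # same bytes, other team
    catalog.register("feature_film", b"dragon mesh data")
    for digest, shows in catalog.shared_assets().items():
        print(digest[:12], sorted(shows))
```

With an index like this, a marketing team could check whether a production asset already exists before commissioning a new build of it.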